Technology deep dive (but don’t be put off if you are a non-technical person: we will keep it high-level)

Last week we discussed how time limits and the likelihood of error make manual redaction very challenging, and why even automated redaction is a hard nut to crack, at least with traditional search methods and “Regular Expressions” (see last week’s blog on Redaction challenges).

This week we will discuss how it can be solved, with AI. But not just any AI. AI is a rather fluid label. Some people even claim that techniques like Regular Expressions constitute AI. Whilst regex certainly has a use case, it has major downsides for redaction purposes, as discussed last week.

Machine Learning and Large Language Models

The key technology is machine learning. But again, not just any machine learning. You need a technique that uses the whole, unabridged text, because the context of the information to be found (and redacted) is key to finding (and redacting) it. We have found that the most reliable and accurate way to do this is by using “Large Language Models” (the technology underlying ChatGPT).

What is a Large Language Model (LLM)?

Essentially, an LLM is a neural network trained on a large body of text (e.g., Wikipedia, Common Crawl, etc.). LLMs predict the next word (or a word in between), given the preceding (or surrounding) sequence of words and sentences. They are trained to do that by leaving words out and having the model fill in the blanks, so to speak. You may know the so-called Cloze test, which tests your ability to fill in the blanks, e.g. “monkeys like to … bananas”. You could say that LLMs are trained to ace that test!
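To make the fill-in-the-blank idea concrete, here is a minimal sketch in Python. It is not a neural network and not how a real LLM is built: it simply counts which word appears between two neighbouring words in a tiny, made-up corpus and picks the most frequent one. The corpus and the names `train` and `fill_blank` are assumptions chosen for illustration only.

```python
from collections import Counter, defaultdict

# Tiny made-up corpus (an assumption; real LLMs are trained on vastly more text).
CORPUS = [
    "monkeys like to eat bananas",
    "children like to eat apples",
    "monkeys like to climb trees",
    "we like to eat bananas",
]


def train(sentences):
    """Count which word appears between each (left, right) pair of neighbours."""
    counts = defaultdict(Counter)
    for sentence in sentences:
        words = sentence.split()
        # Treat every word that has both a left and a right neighbour as a "blank".
        for i in range(1, len(words) - 1):
            counts[(words[i - 1], words[i + 1])][words[i]] += 1
    return counts


def fill_blank(counts, left, right):
    """Return the word most often seen between `left` and `right`, or None."""
    candidates = counts.get((left, right))
    if not candidates:
        return None
    return candidates.most_common(1)[0][0]


model = train(CORPUS)
print(fill_blank(model, "to", "bananas"))  # prints "eat"
```

A real LLM replaces these simple counts with a neural network that looks at far more than the two neighbouring words, which is what lets it generalise to sentences it has never seen.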

As a result, these models can capture the patterns of language, such as grammar and style (which makes ChatGPT so eloquent!), but also, if enough training data is used, semantics and logic (which makes ChatGPT able to produce informative text, though not always accurately; an interesting read on that: ChatGPT is no stochastic parrot. But it also claims that 1 is greater than 1). An additional advantage of these models is that they are trained without the need for humans to “label” the data first. That makes it feasible to train LLMs on very large amounts of data with relatively little human effort (other than that of very smart data scientists, and of course huge computing resources!).

How we use LLMs at Imprima

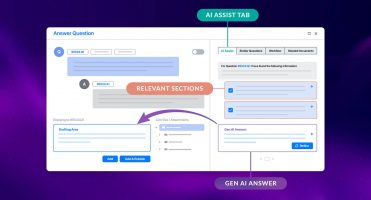

At Imprima, we use an LLM that we have customised for the purpose of redaction and trained on documentation typically found in Due Diligence (DD). We have trained it in multiple languages, and it predicts across languages: training data in one language also improves accuracy in the others.

It is designed and trained to categorise words (or other “tokens”, such as numbers) so that it can subsequently find the words that, in the redaction use case, are to be redacted. This has several advantages. Because it uses the context of the words to be redacted, it relies on the meaning of those words rather than on the exact words themselves. It therefore bypasses the major disadvantage of traditional techniques such as search or Regular Expressions (see last week’s blog post).
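For the more technically minded, the step from categorised tokens to redacted text can be sketched as follows. This is an illustration only, not Imprima's implementation: the label names (`PERSON`, `ORG`, `O`), the `redact` function, and the hard-coded labels are all assumptions standing in for what a trained model would predict.

```python
# Categories that should be blacked out (hypothetical label names).
REDACT_LABELS = {"PERSON", "ORG"}


def redact(tokens, labels, redact_labels=REDACT_LABELS, mask="[REDACTED]"):
    """Replace every token whose predicted category is sensitive with a mask."""
    if len(tokens) != len(labels):
        raise ValueError("each token needs exactly one label")
    return " ".join(
        mask if label in redact_labels else token
        for token, label in zip(tokens, labels)
    )


# The labels below are written by hand to illustrate the kind of output a
# context-aware model could give; "O" means "not sensitive".
tokens = ["Mr", "Baker", "met", "a", "baker"]
labels = ["O", "PERSON", "O", "O", "O"]

print(redact(tokens, labels))  # prints "Mr [REDACTED] met a baker"
```

Note that the same word appears twice, as a surname and as a profession, and only the surname is redacted. A case-insensitive search for “baker” would hit both; a categorisation based on context can tell them apart.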

That said, its overarching advantage is its accuracy and reliability, which go far beyond what any other automated redaction can do. We have shown that in a previous blog post (Smart VDRs – it is all about accuracy), and we will discuss it further in the next blog post.

Conclusion

At Imprima, we believe that LLMs can really transform the Due Diligence process, not only for mundane tasks such as redaction, but also for extracting key data relevant for DD (our Smart Summaries tool is a first step towards that goal). Though many worry about AI’s potential threats to humanity (Runaway AI Is an Extinction Risk, Experts Warn), others mostly focus on its benefits for business (Can AI help you solve problems?), and so do we.

To end on a lighter note: AI is not a nuclear weapon, even though the rhetoric sometimes seems to suggest otherwise (a quiz recently published in the NYT is testament to that: A.I. or Nuclear Weapons: Can You Tell These Quotes Apart?).

Next week we will discuss how accurate and reliable LLM-based redaction is, by showing results on actual DD data, and how it works in practice.

Stay tuned!

Are you looking for a VDR with fully integrated redaction software which leverages AI? Speak to our sales team or check out our Smart Redaction page here.