Virtual Data Rooms (VDR) have transformed the way M&A takes place, providing both security and convenience to the transaction. With the now extensive capabilities VDRs offer, from document management, audit trails, detailed user permissions and Q&A functionality, to advanced reporting, and in some VDRs AI-based redaction, the VDR is an essential element of nearly all M&A transactions today. (Interested in learning about the history of VDRs – read an earlier blog here).

More recently, with the rise of automated DD tools that can automatically identify and extract specific clauses and key data from contracts (AI-DD tools), reviewers can now conduct the exercise with increased speed and accuracy.

While there are several options for implementing AI-DD tools, we will explain that it is key to utilise a tool that is part of the in-built functionality of the VDR (“VDR-Native AI”) as opposed to tools that are “integrated” with the VDR via an API.

Why?

Because when you use two platforms connected via an API link (one being the VDR, and the other the AI-DD tool), the data in the VDR is copied over to the AI-DD tool, into a different (and potentially not security certified) document storage system and data base.

Only with VDR-Native AI does the documentation and meta data reside in one platform, only one document storage system is used, and only one data base comes into play (all part of the secure VDR).

Why is that important?

Secure Data Sharing

Organisations that opt for a VDR-Native AI, do so in the knowledge that their data is secured. State-of-the-art VDRs employ advanced encryption techniques and access controls to safeguard data during transit and storage, minimising the risk of interception or unauthorised access, and are ISO-27001 certified.

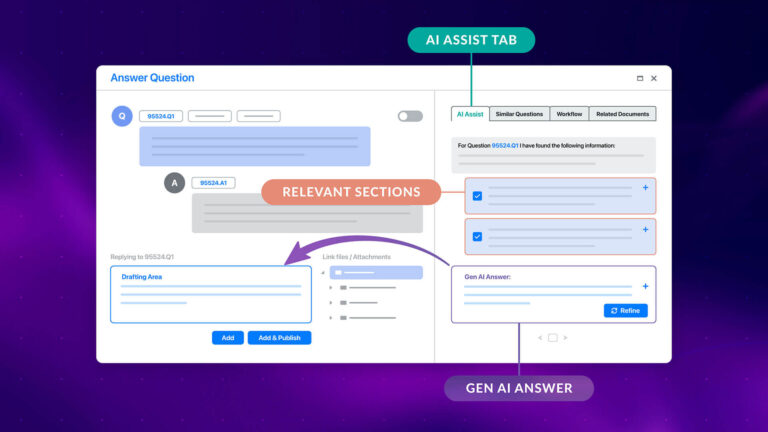

Therefore, the AI-DD tool – native to the VDR – operates within a secure digital environment where access can be tightly controlled. Administrators can assign specific permissions to users, allowing them to view summaries of documents and any notes made, while ensuring that sensitive information remains protected.

Seamless Collaboration

Using VDR-Native AI enables your team to work together collaboratively, in one platform. In the VDR you can access integrated reports, including the AI reports. You can make notes on your colleagues’ findings, and reply to their notes, including the results of the AI analysis. All of this can happen in real time and because users are all working in the same workspace, any findings can be shared with the team instantaneously, so everyone is always on the same page. Having one single source of information, ensures collaboration is organised and reduces the chance of errors or duplication of work. When there is no need to transfer the documentation outside of your secure VDR environment, this streamlines the workflow, saving users time as well as ensuring the security of the documents.

Additionally, both the sell side and the buy side get to make use of the extra insight and efficiency without the need to request or permit download rights, ensuring the documents stay within the VDR.

In conclusion: with VDR-native AI, M&A professionals can benefit from increased time savings, data security and enhanced collaboration.

Are you looking for a VDR with fully integrated AI-DD software? Speak to our sales team or check out our Smart Summaries page here.